Here we are at the last project of the first semester of my MSBA degree at Wake Forest. And now is a great time for a failed model-building experience. The assignment was to build a logistic regression model (or random forest/gradient boosting if one wanted to give it a try) to predict whether loans would default. We had about 70,000 observations of 18 variables to train the model and another set of about 30,000 to validate the results.

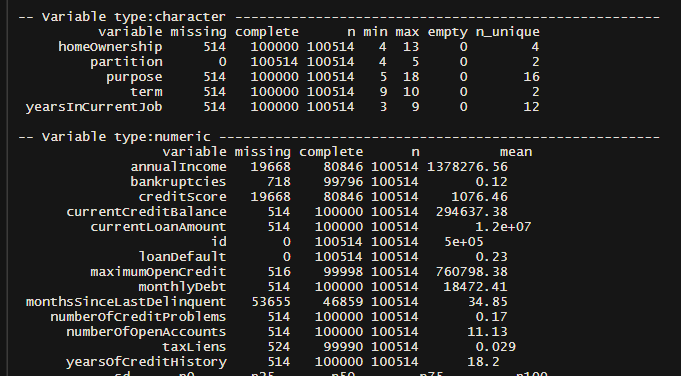

First off, this was real-world data, so it was…kind of a mess. Just look at all those missing values.

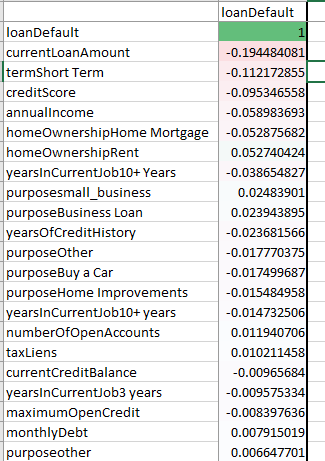
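For anyone who wants to run the same kind of check, here is a minimal sketch of counting the share of missing values per column. The rows and column names are made up for illustration (they are not the actual loan data), and missing entries are assumed to be stored as `None`.

```python
# Toy rows standing in for the loan data. The column names and values
# are hypothetical, not taken from the real data set.
rows = [
    {"credit_score": 712, "annual_income": 58000, "months_since_delinquent": None},
    {"credit_score": None, "annual_income": 41000, "months_since_delinquent": None},
    {"credit_score": 688, "annual_income": None, "months_since_delinquent": 14},
    {"credit_score": 745, "annual_income": 96000, "months_since_delinquent": None},
]


def missing_share(rows):
    """Return {column: fraction of rows where the value is None}."""
    columns = rows[0].keys()
    n = len(rows)
    return {col: sum(r[col] is None for r in rows) / n for col in columns}


shares = missing_share(rows)

# Print the columns with the most missing data first.
for col, share in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{col:<26}{share:.0%}")
```

A column that is mostly empty is a candidate for dropping or for a "was this missing?" indicator, while a column with a few gaps is a candidate for imputation.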

And there weren’t a lot of strong correlations to work with:

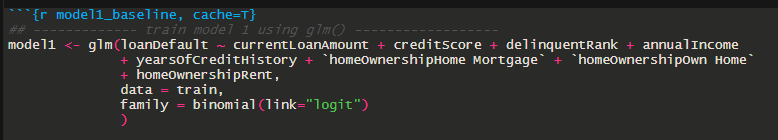

So, on to a quick logistic regression as a baseline. Let’s work with the first few variables, as they appear to be somewhat correlated. Credit score and term Short seemed to have a little correlation with each other, but not enough to worry about. So here’s the first pass at the model:

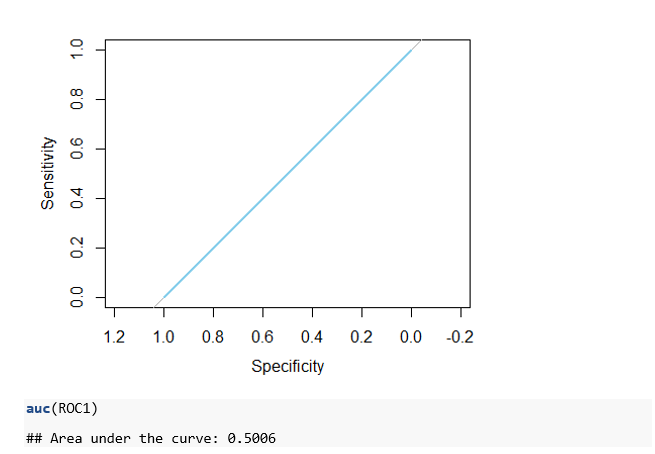

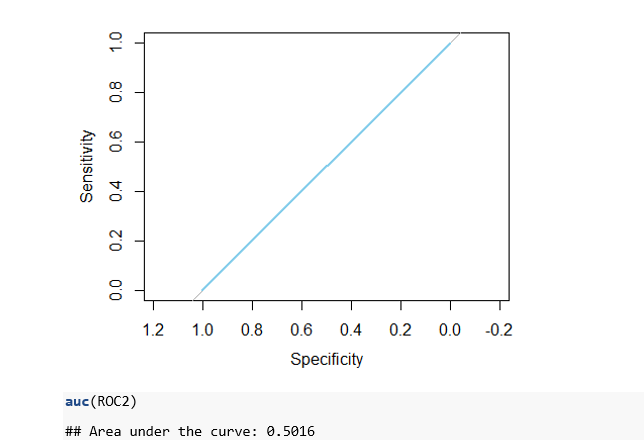

And here’s the ROC (receiver operating characteristic) curve. Oof.

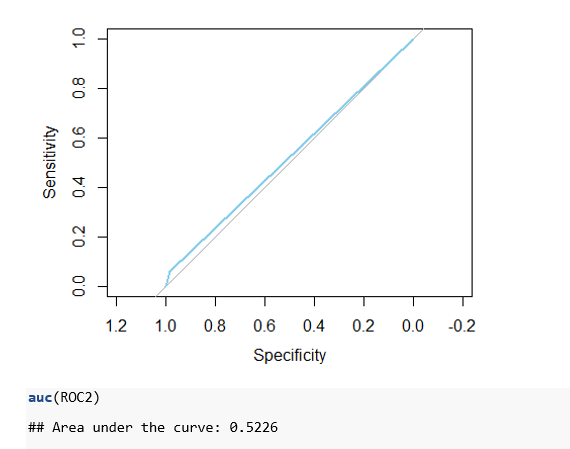

We’d like to see the light blue line well above the gray diagonal line across the center, which is what random guessing would produce. The higher above the gray line, the better the model. This particular curve indicates that we have a fairly worthless model.
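To put a number on "how far above the gray line," the usual summary is the area under the ROC curve (AUC). The sketch below computes it directly from its definition: the probability that a randomly chosen defaulter gets a higher score than a randomly chosen non-defaulter. The labels and scores are invented for illustration, and the pairwise loop is only practical for small samples.

```python
def roc_auc(y_true, scores):
    """AUC as the chance that a random positive outscores a random negative.

    Ties count as half a win, which matches the Mann-Whitney U statistic.
    """
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Made-up labels (1 = default) and model scores, for illustration only.
labels = [0, 0, 1, 0, 1, 0, 1, 0]
useful_scores = [0.1, 0.2, 0.8, 0.3, 0.25, 0.4, 0.9, 0.2]
constant_scores = [0.15] * len(labels)  # a model that says the same thing for everyone

auc_useful = roc_auc(labels, useful_scores)
auc_constant = roc_auc(labels, constant_scores)
print(f"useful model AUC:   {auc_useful:.3f}")
print(f"constant model AUC: {auc_constant:.3f}")
```

A model that cannot tell the two groups apart lands at 0.5, which is the diagonal line. A curve hugging that diagonal means the scores carry almost no ranking information.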

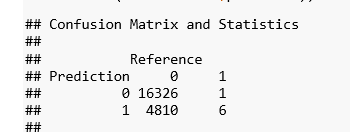

And the confusion matrix? Oof.

So we predicted 6 defaults correctly and missed on 4,800. Great!
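To see just how bad that is, here is the recall (the share of actual defaults the model caught), using only the two counts quoted above. The other cells of the confusion matrix are not needed for this calculation.

```python
# Counts taken from the sentence above: 6 defaults caught, 4,800 missed.
true_positives = 6
false_negatives = 4800

# Recall = caught defaults / all actual defaults.
recall = true_positives / (true_positives + false_negatives)
print(f"recall = {recall:.4%}")
```

That works out to roughly a tenth of one percent of defaults being flagged.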

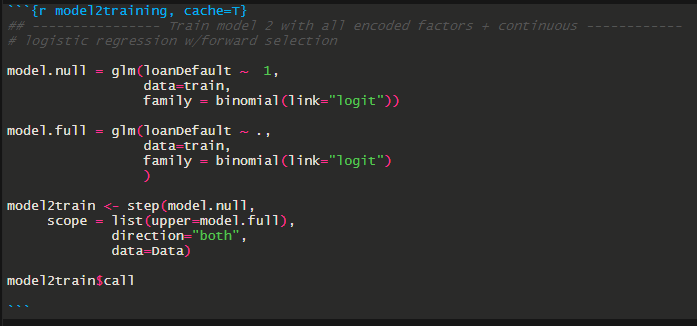

Using stepwise selection on all the variables wasn’t much better. Or…at all better.

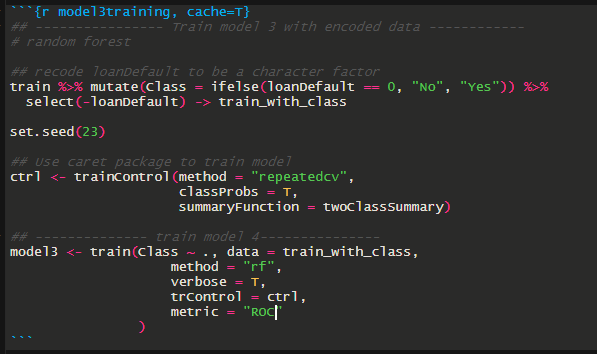

Let’s see how random forest works out.

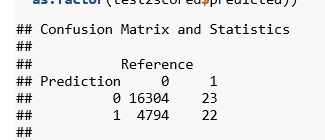

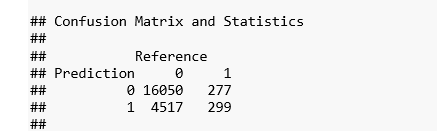

Well, that’s a little better, but still not nearly good enough. And we’re getting nearly as many false positives as hits.
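"Nearly as many false positives as hits" translates into precision, the share of predicted defaults that really were defaults. Since the exact counts live in the image, the sketch below works from the ratio of false positives to true positives instead. The ratios in the loop are illustrative assumptions, not figures from the model.

```python
def precision_from_ratio(fp_per_tp):
    """Precision when there are `fp_per_tp` false positives per true positive.

    precision = TP / (TP + FP) = 1 / (1 + FP/TP)
    """
    if fp_per_tp < 0:
        raise ValueError("ratio of false positives to true positives cannot be negative")
    return 1.0 / (1.0 + fp_per_tp)


# Illustrative ratios only; 1.0 means exactly as many false positives as hits.
for ratio in (0.5, 0.9, 1.0):
    print(f"FP per TP = {ratio:.1f} -> precision = {precision_from_ratio(ratio):.1%}")
```

So a ratio close to one-to-one means only a bit more than half of the flagged loans would actually default.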

So what’s going on here? Well, remember that messy data? I clearly did a poor job cleansing and transforming it into something useful. But that’s valuable information to have. If this were a real-world scenario, we’d just head back to the top and see if we could clean that data a bit more.

But this being a class assignment means I get to turn in a report that says I failed to build a good model. That’s fine, since learning the steps matters more here than getting a great model. Hopefully next time will go a little more smoothly.